Upcoming Events:

- Check out our new Google Calendar for committee meeting times!

IGVC - Navigating our way to Competition Day

Welcome back to another semester of UT RAS’ intelligent ground vehicle team!

In the past few weeks (possibly days), we have made considerable progress in getting a working simulation and pathfinding algorithm up and running. Here’s just a snippet of the kinds of things we’re working on right now!

Gazebo

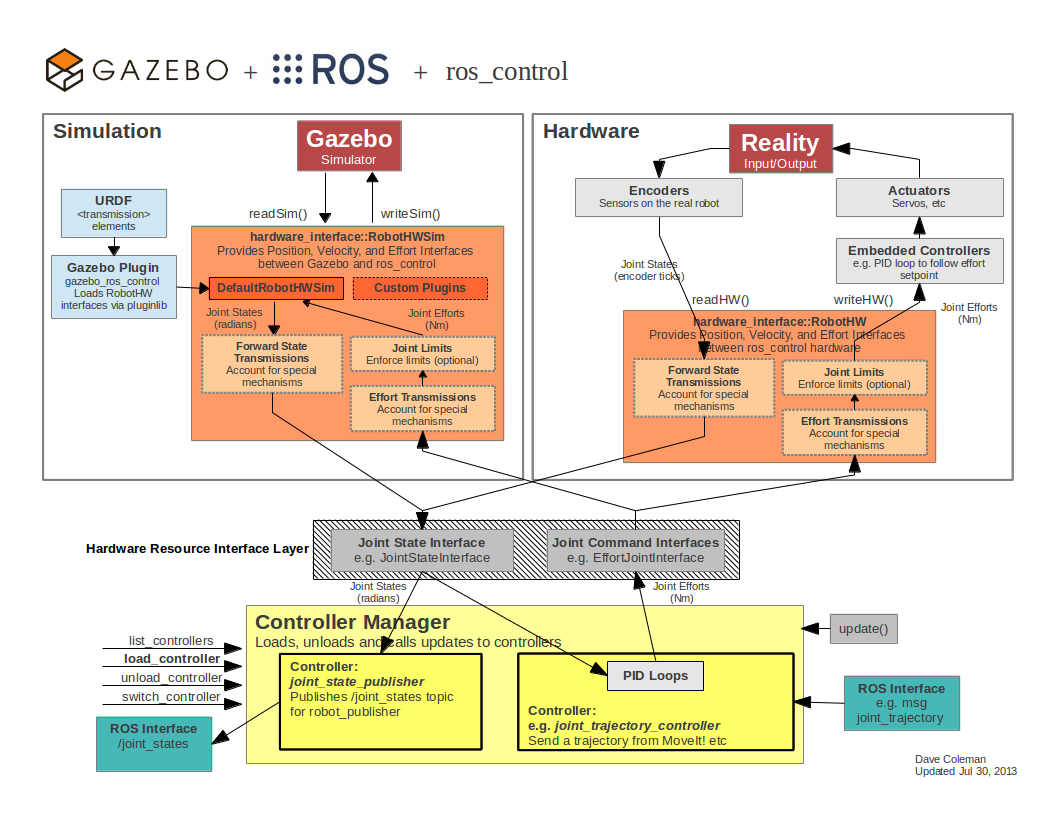

Simply put: Gazebo is an amazing tool for simulating real-world environments, especially for robotics projects. What is especially useful for us is that we can run the same working algorithms inside Gazebo as on our actual robot! Gazebo is all about matching the parameters found in the real world so that the transition from simulation to physical hardware is as seamless as possible.

Why bother with simulations to begin with?

Firstly, we do not currently have access to some of the hardware. We are working with a handful of companies to get our hands on a LIDAR sensor. Secondly, our robot chassis is abnormally large. Simulating this hardware makes simple testing far easier than wheeling the robot out to, say, a field or parking lot.

Our Simulation Process in a Nutshell

At first glance, Gazebo can appear to be a bit hectic. There are a lot of new things thrown at you all at once, and at times it can be overwhelming. Fortunately for us, the creators of Gazebo have produced dozens of thoroughly documented tutorials. This is extremely useful for inexperienced users like us.

Step One: Creating a Model

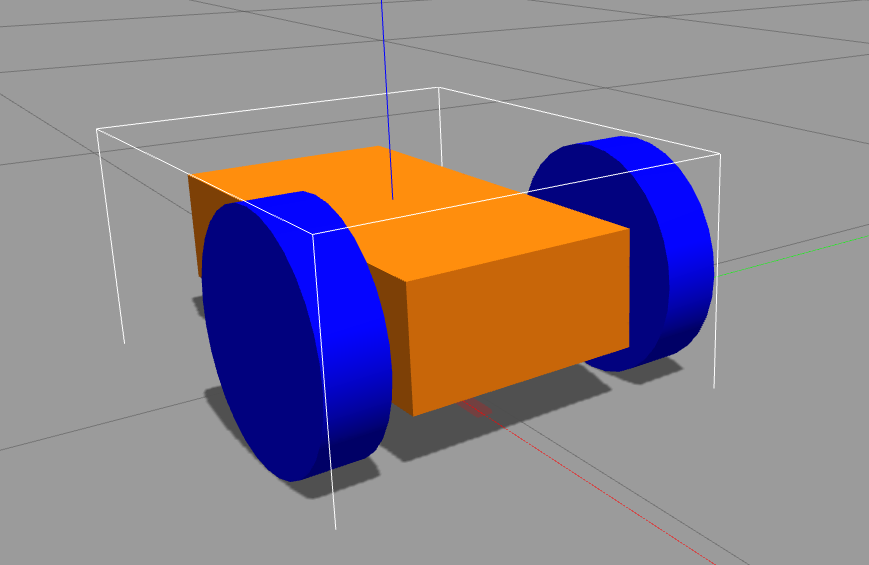

Gazebo uses a standard description format known as SDF, or Simulation Description Format. Read here to learn more about SDF as well as URDF, a description format that ROS uses. Here’s an example of a relatively simple differential-wheeled robot that is made using SDF.

For testing purposes, this satisfies our hardware requirements just fine. Just like this simulated robot, our bot uses a differential drive setup, meaning its motor controllers can actuate the wheels independently of one another.
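
To make the differential drive idea a bit more concrete, here’s a minimal Python sketch of how a commanded forward speed and turn rate could be split into independent left and right wheel speeds. This is not code from our robot or from Gazebo; the function name and the wheel separation and radius values are made up for illustration.

```python
def wheel_speeds(linear, angular, wheel_separation, wheel_radius):
    """Split a body velocity into (left, right) wheel speeds in rad/s.

    linear:  forward speed of the chassis in m/s
    angular: turn rate of the chassis in rad/s (positive = counter-clockwise)
    wheel_separation and wheel_radius are in meters (hypothetical values below).
    """
    # Each wheel travels faster or slower than the chassis center
    # depending on which side of the turn it is on.
    v_left = linear - angular * wheel_separation / 2.0
    v_right = linear + angular * wheel_separation / 2.0
    # Convert linear speed at the wheel rim to wheel rotation speed.
    return v_left / wheel_radius, v_right / wheel_radius


if __name__ == "__main__":
    # Drive straight ahead: both wheels spin at the same rate.
    print(wheel_speeds(1.0, 0.0, wheel_separation=0.5, wheel_radius=0.1))
    # Spin in place: the wheels turn in opposite directions.
    print(wheel_speeds(0.0, 1.0, wheel_separation=0.5, wheel_radius=0.1))
```

Because the two wheels are driven separately, the robot can turn on the spot simply by running them in opposite directions.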

Step Two: Adding Sensors to the Model

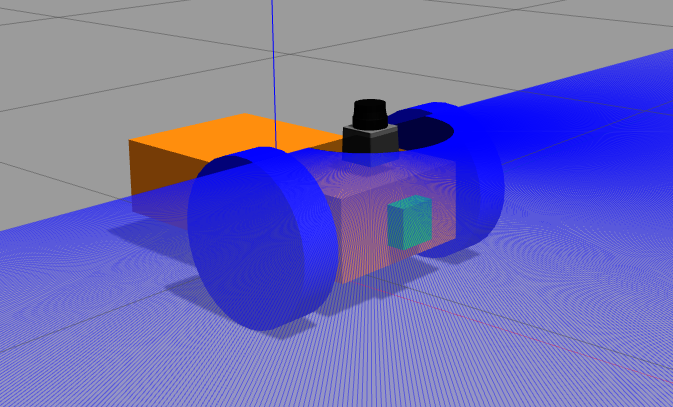

Adding sensors physically to the simulated robot is just as simple as adding wheels and casters to the chassis link. However, these attached sensors need plugins to work as they would in real life.

Luckily for us, Gazebo tutorials once again save the day. Writing the basic plugins for cameras and LIDAR scanners ourselves would be highly impractical. Here’s a list of all the plugins Gazebo already has implemented, including depth cameras, lasers, IMUs, and much more.

Step Three: Building an Environment and Path Planning in Real-time

This step is by far the most complicated in the entire process. Not only that, but a lot of the work happens behind the scenes in ROS packages (move_base, GMapping, amcl, etc.). Despite these hurdles, we have managed to produce a simulation worth using in our testing process.

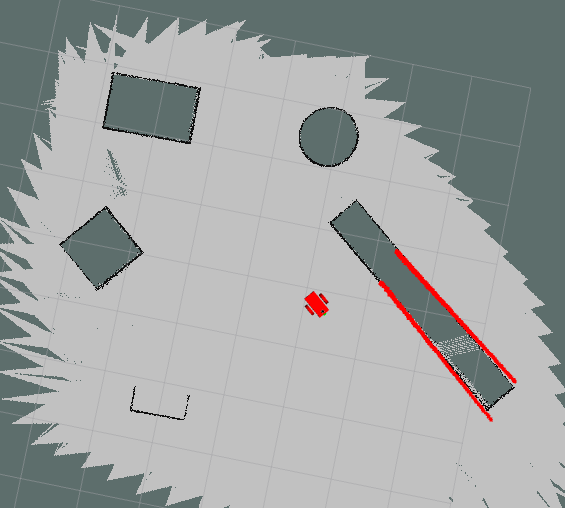

Firstly, we have to create a static map image of what the robot thinks the environment looks like. This is done by driving the bot around until it can “fill in” all the blanks it is uncertain about. A final result is shown in the accompanying image. If you look at the empty regions in the image, you’ll notice a sort of grainy texture on the edges of each object. We have implemented Gaussian noise in our sensor reading plugin to add a greater degree of realism to our simulation.
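
For a rough idea of what Gaussian noise on a range sensor means, here’s a small Python sketch. It is not our actual plugin code; the function name, the standard deviation, and the range limits are all assumptions picked for illustration.

```python
import random


def add_gaussian_noise(ranges, stddev=0.01, range_min=0.1, range_max=30.0, rng=None):
    """Return a noisy copy of a list of range readings (meters).

    Each reading gets an independent zero-mean Gaussian offset and is then
    clamped to the sensor's valid range. All default values are hypothetical.
    """
    rng = rng or random.Random()
    noisy = []
    for r in ranges:
        sample = r + rng.gauss(0.0, stddev)
        # Keep the reading inside what the sensor could physically report.
        noisy.append(min(max(sample, range_min), range_max))
    return noisy


if __name__ == "__main__":
    clean_scan = [2.0, 2.0, 2.0, 5.0, 5.0]
    print(add_gaussian_noise(clean_scan, stddev=0.05, rng=random.Random(42)))
```

A flat wall that reads exactly 2.0 m in a perfect simulation now comes back slightly different on every beam, which is what produces the grainy edges in the map.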

Once we have a map built, we are ready to use the amcl package and global/local path planners to navigate in real time. The following video shows a visualization of this entire process.

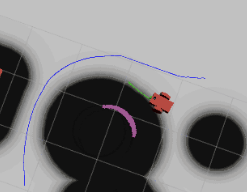

As the robot moves around, you’ll notice a couple of things. First off, each obstacle is surrounded by black circles in the Rviz window. These circles are combined to create a cost map for this specific environment. The black circles signify regions that are close to obstacles and should be avoided when possible. This acts as a buffer for the robot in case it misreads where an obstacle is located. The size of these buffers is configurable.
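
The buffer idea can be sketched in a few lines of Python. This is a simplified stand-in and not how the ROS cost map is actually implemented: it marks every free cell within a fixed radius of an obstacle with a single “avoid if possible” value, and the cell values and function name are made up for illustration.

```python
FREE = 0        # hypothetical cell values, chosen only for this sketch
INFLATED = 50
OBSTACLE = 100


def inflate(grid, radius):
    """Return a copy of an occupancy grid with a buffer around each obstacle.

    grid is a list of rows; radius is the buffer size in cells.
    """
    rows, cols = len(grid), len(grid[0])
    inflated = [row[:] for row in grid]
    for r in range(rows):
        for c in range(cols):
            if grid[r][c] != OBSTACLE:
                continue
            # Visit every cell inside a circle centered on the obstacle.
            for dr in range(-radius, radius + 1):
                for dc in range(-radius, radius + 1):
                    if dr * dr + dc * dc > radius * radius:
                        continue
                    nr, nc = r + dr, c + dc
                    in_bounds = 0 <= nr < rows and 0 <= nc < cols
                    if in_bounds and inflated[nr][nc] == FREE:
                        inflated[nr][nc] = INFLATED
    return inflated


if __name__ == "__main__":
    grid = [[FREE] * 5 for _ in range(5)]
    grid[2][2] = OBSTACLE
    for row in inflate(grid, radius=1):
        print(row)
```

Making the radius larger keeps the robot farther from obstacles, at the cost of closing off narrow gaps it might otherwise fit through.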

If you look closely, you can also see two distinct lines, or paths, that the robot is planning and following. The blue line indicates the global path, whereas the green line is the local path. The global path is planned in advance from the static map that the amcl package is running off of. The local path, on the other hand, is constantly changing because the robot is measuring, in real time, the distance to each object with the simulated lidar sensor. You’ll also notice that the robot won’t follow the global path perfectly. This is because the robot is seeing locations within its path that actually aren’t obstacles, despite what the global cost map is telling it.
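
Here’s a toy Python sketch of why the two paths can disagree. It uses a plain breadth-first search on a tiny grid as a stand-in planner, which is an assumption for illustration and not what move_base runs internally. The same planner is run once on the saved static map and once on what the sensor currently sees.

```python
from collections import deque


def plan(grid, start, goal):
    """Shortest 4-connected path on a grid of 0 (free) and 1 (blocked) cells.

    Returns a list of (row, col) cells from start to goal, or None if the
    goal cannot be reached.
    """
    rows, cols = len(grid), len(grid[0])
    parents = {start: None}
    queue = deque([start])
    while queue:
        cell = queue.popleft()
        if cell == goal:
            # Walk the parent links back to the start.
            path = []
            while cell is not None:
                path.append(cell)
                cell = parents[cell]
            return path[::-1]
        r, c = cell
        for nr, nc in ((r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)):
            in_bounds = 0 <= nr < rows and 0 <= nc < cols
            if in_bounds and grid[nr][nc] == 0 and (nr, nc) not in parents:
                parents[(nr, nc)] = cell
                queue.append((nr, nc))
    return None


if __name__ == "__main__":
    # The static map believes the center cell is blocked.
    static_map = [[0, 0, 0],
                  [0, 1, 0],
                  [0, 0, 0]]
    # The live sensor reading shows that cell is actually free.
    sensed_map = [[0, 0, 0],
                  [0, 0, 0],
                  [0, 0, 0]]
    print("global:", plan(static_map, (1, 0), (1, 2)))
    print("local: ", plan(sensed_map, (1, 0), (1, 2)))
```

The plan made from the static map detours around the center cell, while the plan made from live data cuts straight through it, which mirrors the robot drifting off the blue line in the video.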

Conclusions

One problem with using this method for path planning is the need for a pre-built map. At competition, each heat, or run, will be randomized, so we expect that our robot will have to navigate a new course every time. Unfortunately, mapping the field beforehand is not an option for us.

Before we close, I would like to give credit to this blog in particular. Considering how complex this third step of the process was, it was nice to see working code published online.

Looking ahead, it is clear we still have a lot of work to do. Between fixing the aforementioned problems and tweaking our lane detection software, our robot is far from finished.

I highly encourage anyone who is interested in working with IGVC to come check us out at the RAS office in EER 0.822! Slack channel: #igvc

Author: Ricky Chen